Music Video: Back Bones + Filming in 3D, Part 1

It’s been a while since I posted new updates and additions at Big Head Amusements, so I thought I’d start with some test footage for an upcoming music video that will be in both flat and 3D. Before I get into some details, however, here are some quick notes on current projects.

Film-wise, I should be hearing back over the next month from festivals regarding the acceptance or rejection of BSV 1172, so fingers crossed it gets into another festival, and maybe one in my hometown of Toronto.

I’m being selective with festivals because BSV 1172 is part narrative, part experimental, making it a tough fit, and submission fees often range from reasonable to outright insane.

Way back around 1991 I had three shorts, and in those pre-internet days the submission process was a bit different. The Canadian Film Development Corporation provided (for free) a modest printout of festivals around the world, making it easy to find local and international possibles. After the submissions phase was over, I figured I’d spent maybe $500 U.S. on fees, but in the early 1990s there weren’t any Early Bird, Regular, Late, and Last Chance deadlines with escalating fees; you either made the deadline or missed it entirely.

Paying in USD is still the norm, and the odds of getting in were probably affected by the same factors as today, but what surprises me now is when a smaller, newer festival charges more than rival veterans, especially in short film & experimental categories. If your mandate is to showcase indie works often overlooked by the majors, charging more is a huge disservice to the many filmmakers working on much tighter budgets. Moreover, if you’re pitching yourself as the feisty, rebellious alternative to the majors, your price point can’t match or exceed that of your rivals, let alone top dog TIFF.

My submission cut-off for BSV 1172 will likely be around mid-summer because that falls within both my budget and the time-frame before the film is available to stream and download.

Moving on…

… Back in December, I posted another ArtScopeTO Q&A with local artists on Vimeo and YouTube, but its belated publishing temporarily shelved a blog on the creation of its visuals, so expect a piece later this month, with some stock footage that looks kinda like this:

Now to the 3D test footage…

I did a few tests last week to see if it was possible to create 3D footage using an old Saticon 3-tube camera, going on the notion that misaligned tubes might be able to replicate strapping two identical cameras side-by-side on a tripod.

Now you ask… Why do this?

3D is supposed to replicate our perception of objects, and a present day, homemade set-up involves placing two genlocked / interlocked cameras side-by-side with a gap that mimics the distance between our own beady little eyes. There are numerous how-to videos on YouTube, but the set-up requires DSLRs with genlock capabilities, an option reserved for pro gear, not consumer grade. You’re also dealing with two cameras producing double the footage, so the memory card capacity and hard drive space needed will be huge, especially in HD.

There are also ways in which footage can be digitally tweaked to fake 3D, but that’s presuming you’ve shot footage with elements that will convey 3D once they’ve been re-oriented, or sliced up and restaged to evoke 3D with software. I’m not a fan of post-rendered 3D, and like to get as much of the hard work done in-camera to save on time and headaches.

So how does a 30 year old tube camera fit in?

Let’s jump back a bit. In the 1980s, ENG videographers had to make sure their cameras had proper white balance, black balance, and registered or aligned tubes before auto-alignment appeared on later cameras.

I’ve a Sony DXC-M3 that was among the first cameras to offer auto-registration, and an earlier JVC KY-1900CH that has none. As recalled by Philip Johnston in his nostalgic blog entry from 2012, you set up the camera with a registration chart, and using a jeweler’s screwdriver, turned each of the four potentiometers to adjust the blue and red tubes vertically and horizontally until the chart had no halos – just a clean pattern.

If the image was off – which could reportedly happen from disuse, regular use, or transportation – you saw a red or blue halo. You wanted to make sure the tubes were aligned because there’s no way to remove those halos, except with custom software that didn’t exist at that time.

(In the early 1990s, I co-directed a corporate promo that had very fucked up halos stemming from the original S-VHS footage. It was a common problem with the format, where each subsequent generation or copy would further exaggerate colour misalignments, and we spent a pretty penny at a post house to fix it.)

The four alignment pots were meant to fix vertical and horizontal displacements… but I figure they could also be used to create specific displacements, perhaps mimicking two interlocked cameras for 3D cinematography.
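For anyone who wants to picture what deliberately displacing the red and blue tubes does to an image, here’s a rough sketch in plain Python. It’s only an illustration under my own assumptions: the frame is a tiny list of (r, g, b) pixels, `shift_channel` is a hypothetical helper name, and a whole-pixel slide is a crude stand-in for what turning a pot actually does inside the camera.

```python
# Hypothetical illustration: slide one colour channel of a frame by whole pixels,
# roughly what a misregistered red or blue tube looks like against green.
# A frame here is a list of rows; each row is a list of (r, g, b) tuples.

RED, GREEN, BLUE = 0, 1, 2


def shift_channel(frame, channel, dx=0, dy=0, fill=0):
    """Return a copy of frame with one channel displaced by (dx, dy) pixels."""
    height = len(frame)
    width = len(frame[0])
    out = []
    for y in range(height):
        row = []
        for x in range(width):
            pixel = list(frame[y][x])
            src_x, src_y = x - dx, y - dy  # where this channel's value comes from
            if 0 <= src_x < width and 0 <= src_y < height:
                pixel[channel] = frame[src_y][src_x][channel]
            else:
                pixel[channel] = fill  # nothing to copy from beyond the frame edge
            row.append(tuple(pixel))
        out.append(row)
    return out


# A 1x5 test frame: black, with a single white pixel in the centre.
frame = [[(0, 0, 0), (0, 0, 0), (255, 255, 255), (0, 0, 0), (0, 0, 0)]]

# Push red one pixel left and blue one pixel right; green stays put.
fringed = shift_channel(frame, RED, dx=-1)
fringed = shift_channel(fringed, BLUE, dx=1)
print(fringed[0])
```

The white pixel splits into a red fringe on one side and a blue fringe on the other, with green left in the middle, which is the halo effect the registration chart was designed to expose.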

My original plan was to test this out using an Ikegami ITC-735 camera because of its superior optics and crisp images, but I noticed the blacks were extremely weak. Either it required a huge amount of light (which is often the case) or some tweaking using the internal potentiometers to fix low black levels, so for now, that camera is out.

I decided on the JVC KY-1900 because its colour reproduction is very robust, and more importantly, you could misalign the red and blue tubes much farther than on the 735. After a series of early test shots, I set up objects in the kitchen, going for colour and distance to see what stayed in focus, and what might convey a sense of 3D.

I also donned red-blue anaglyph glasses before shooting each take to make sure the camera seemed to convey a 3D image, whereas minus the glasses, everything looked trippy. That footage was dropped into a Premiere timeline, takes were edited into a straightforward montage, and several renderings were done to see where colours, brightness, and layers had to be adjusted.

Here’s the end result, for which you’ll need red-blue glasses to see for yourself whether misaligned tubes can capture 3D footage:

On Vimeo:

On YouTube:

There are only 2 tracks – the red and the blue – but it became very clear a lot of fiddling was required in Premiere, in terms of adjusting brightness & contrast, RGB level tweaks, and slight shifting of the tracks so things aren’t so misaligned that the overall image turns blurry.

There’s also a huge brightness disparity between looking at the footage with and without the glasses, hence Test #2 will focus on how much light is required to lessen adjustments in Premiere. That’ll be followed by finding the right focal planes so multiple objects instead of just one are sharp, and convey spatial distances.

The music video will include neither a kitchen, a bowl of fruit, nor pots and pans; Test #1 is purely to see if this idea might work, and where there are issues in brightness and accurate colour reproduction. I read in one online piece that with anaglyph 3D, reds can be black or greyish, and I wonder if that’s a lighting issue, or an inherent flaw in this particular system.

The oddest benefit was how the glasses minimized video grain and rolling AC lines from a bad connection during filming. The glasses make the footage seem weirdly sharper, which shouldn’t be the case, since this is a straight SD uncompressed AVI export of the same 720×480 footage.

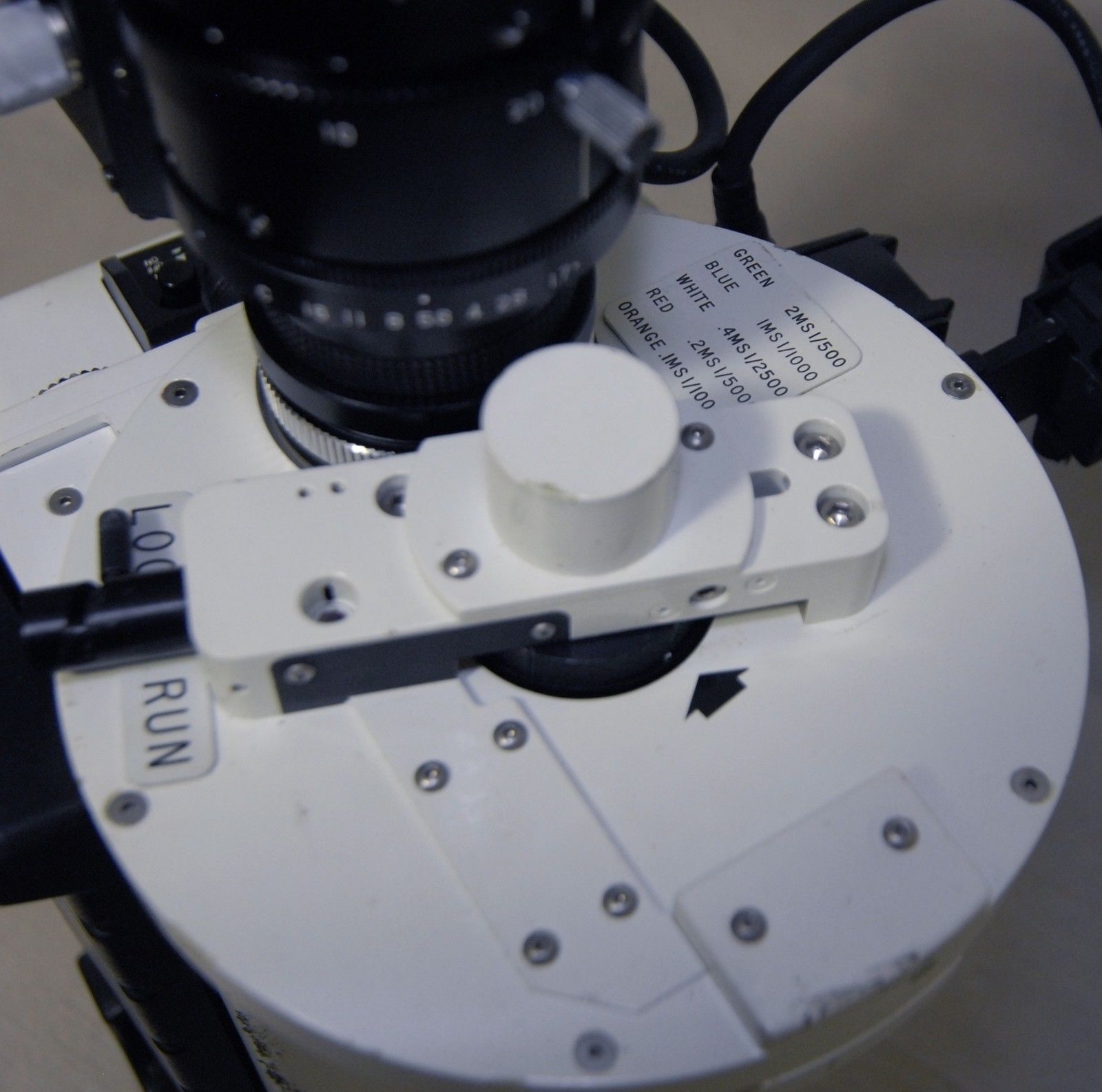

Another facet I have to test is whether I can film billowing smoke in 3D, using a ‘scientific’ camera that has a shutter wheel with speeds ranging from 1/500 to 1/10,000.

It’s also a JVC KY-1900 model rebuilt to shoot fast motion. I’ll post some prior test footage of bubbling water, but this particular camera requires a ridiculous amount of light if you’re shooting at 1/10,000, and it’s the heaviest camera I’ve ever held.

Thanks for reading,

Mark R. Hasan, Editor

Big Head Amusements